All Posts

Driverless continues driving nowhere

This should drive everybody nuts.

The road to driverless remains undrivable

Surprising? Not at all.

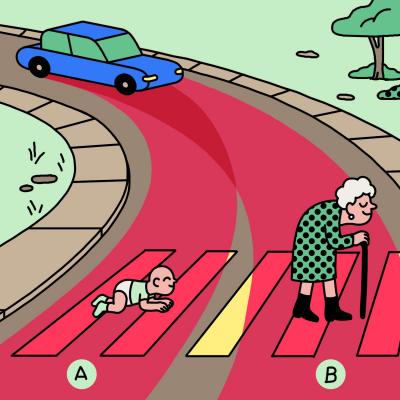

A new, overcomplicated step towards road safety

ISA, a.k.a. car safety made complicated. And fragile.

AI will never give you driverless cars

Unless it DOES become like the human brain, that is.

Autonomous cars will kill the car industry

Stay away from their stocks. Seriously.

Never buy a flying car...

Unless it’s not only for you.