Smarter guns for dumber gun control

The (tragically) funniest thing on gun control in a long time.

The news: Smart Weapons equipped with Ethical Artificial Intelligence

Last week I saw two articles about “smarter guns”: an op-ed titled “Use AI to stop carnage”, and a comment to that op-ed asking “Can smarter guns end the gun control debate? #RPI expert proposes ethical-AI technology as a solution”.

The rest of this section collects the best passages from those two articles (emphasis mine, when present). The sections that follow sum up the thoughts and questions they raised in my mind (and, I hope, in yours too):

- Smarter weapons equipped with “ethical AI” could change the debate from the restrictions we place on [ownership of] weapons .. to the targets we allow: Should guns be able to shoot at school children, shoppers, concert-goers?

- with ethical-AI enabled weapons American politicians [could finally craft] meaningful legislation that establishes the targets we permit weapons to fire upon.

- [on its current trajectory, US] will have no reliable defense against mass shootings like the Charlie Hebdo tragedy, in which military-grade guns that can’t be obtained in France without the equivalent of FBI-level clearance were legally purchased in Slovakia, smuggled in [and then] unleashed upon journalists in Paris.

- Yet there is a solution: Artificial Intelligence (AI) of an ethical sort. Guns that are at once intelligent and ethically correct can put an end to the mass-shooting carnage.

- [If it were intelligent and ethically correct], the rifle used in the El Paso Walmart shooting would know that it’s approaching the Walmart by car and would accordingly know that it has no business being used anytime soon. [Hence, it would fully lock itself]

- On the other hand, the guns in the hands of law enforcement officers.. know in whose hands they rest, and accordingly know that if they are trained on the would-be killer, they have every right to work well.

- People who don’t actually pose a threat sufficient to warrant being shot by police can’t be shot by smart, ethical guns

Please explain smart weapons equipped with ethical AI to me

The author of the op-ed is S. Bringsjord, a professor in the departments of Cognitive Science and Computer Science and an expert in logic and philosophy, specializing in AI and reasoning. The comment to his proposal says he is “comfortable speaking with media and available to speak about the concept of ethical guns”. I look forward to reading his answers to the doubts I list here.

Ethics by artificial intelligence and governments?

Those smart weapons would use ethical artificial intelligence to know, in any circumstance, that they have “no business being used anytime soon”.

I can’t wait to see the flow diagram of such algorithms. Because they would surely be 100% public, right? In the meantime, the “change the debate to the targets we allow” is enough to give me the creeps. Real big creeps.

“We” who? Today’s face recognition algorithms have trouble recognizing people of color as humans. The recent history of the USA is full of cases of people who “warranted being shot by police”. And even in Italy, and in many other countries, there are plenty of politicians who would love to push their own definition of “targets”. In general, when they work, algorithms do what the people who regulate them tell them to do. Who decides who is allowed as a target? The current government? The “majority”? Next:

As far as the US is concerned, that op-ed says that politicians should “work constructively” to make such weapons the only ones in town. But, as far as I understand, the whole point of the “individual right theory” of the Second Amendment, the one used to reject gun control, is exactly to give people the right to resist their government when it harms them. Am I missing something here? How could US politicians even start working on this, as long as that Amendment stands as it does today?

How could smart weapons ever work RELIABLY?

A rifle knowing that it is going shopping by car would “accordingly know that it has no business being used anytime soon”? OK, let’s accept it.

But where would it be usable then? By what criteria? What should it do when it does not have enough data, or the connectivity to phone home? I take for granted that in any such situation it would lock itself. Because otherwise, all a killer would need to do to cause carnage would be to wrap it in tin foil.
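To make that “lock itself when in doubt” rule concrete, here is a minimal sketch of what I mean. It is purely my assumption of how such a check could look, not anything taken from the op-ed: the `Context` fields and the `may_fire` function are hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Context:
    """Hypothetical snapshot of what the gun knows right now."""
    location_known: bool            # does it have a trusted position fix?
    link_up: bool                   # can it reach its (assumed) remote authority?
    target_allowed: Optional[bool]  # None means "could not decide"


def may_fire(ctx: Context) -> bool:
    """Fail closed: any missing input means the gun stays locked."""
    if not ctx.location_known or not ctx.link_up:
        return False
    # Only an explicit "allowed" unlocks; None (unknown) is treated as "no".
    return ctx.target_allowed is True


# A gun wrapped in tin foil: no position, no link, no decision.
wrapped_in_foil = Context(location_known=False, link_up=False, target_allowed=None)
print(may_fire(wrapped_in_foil))
```

With this rule the tin foil trick only produces a locked gun. The price is that whoever can cut off the gun’s data also decides when it stops working.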

On the same note, this thing should be impossible to hack in any way. Assuming that’s possible, it means that it would not be maintainable in the field (that is, “worth buying”) by its owner alone. Is there one survivalist or “prepper” in the whole world who would pay more than nothing for something like this?

“Should guns be able to shoot at school children, shoppers, concert-goers?”

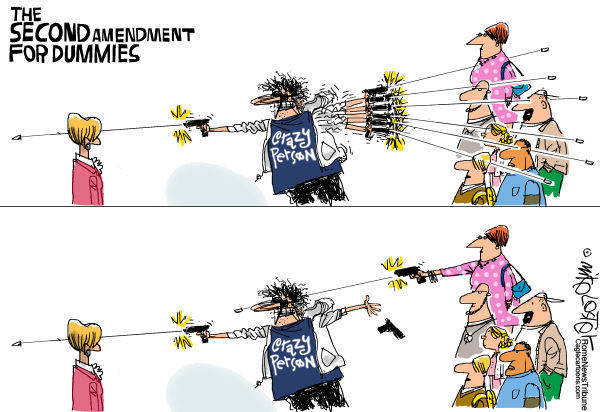

What if the bad guy is one of the shoppers in a mall, or a concert-goer? More on this below.

How would smart weapons prevent terrorism in the REAL world?

I fail to see how, or why, the Charlie Hebdo argument applies only in Europe, or only with “dumb” guns. How would the availability in US stores of nothing but “intelligent and ethically correct guns” prevent terrorists from smuggling in “dumb” guns from Mexico? Why should a terrorist not bring along, besides such guns, a jammer to prevent the “smart guns” of the cops from working? Or just place a bomb somewhere?

I have a strong feeling that nothing would do more than this to increase the clandestine sales of Kalashnikovs, or of 3D printers for untraceable, software-free guns.

Let’s assume they work. How could “ethical smart weapons” ever be accepted by:

Americans? (or anybody else)

Even ignoring reliability, a “smarter than you” weapon is, by definition, diametrically opposed to the whole American mythology of the lone hero. You know, the guy who, by manifest destiny, is always in full control and fully responsible for all his actions because, and only if, he has a gun and can use it.

This would also destroy the whole idea that the only way to stop bad guys with guns is good guys with guns, which is one big reason to buy guns and to open carry, wouldn’t it?

What is the point of buying a machine gun “just in case” you ever need to take down a shooter smart enough to bring a “dumb” Kalashnikov into the same mall as you?

Law Enforcement officials?

Would cops agree to go into a building to neutralize terrorists with weapons that have their own, independent “right to work well” or not? Should they?

A cop doing his job, fully relaxed because his smart gun knows by itself whether it can fire or not.


the NRA and other gun lobbies?

<u><em><strong>CAPTION:</strong>

<a href="https://askepticalhuman.com/politics/2019/8/26/debunking-republicans-guns-dont-kill-people-amp-criminals-will-switch-to-knives-amp-other-weapons" target="_blank">But then criminals will switch to KNIVES!</a>

</em></u>

“Guns that are at once intelligent and ethically correct” destroys in one sentence the whole mantra that “guns don’t kill people, people kill people”. It makes guns like cigarettes. Gun lobbies will fight this to the death (pun unavoidable, sorry). Because if these things become mandatory, sales of all such new firearms will tank enough to actually make the USA a safer place. Maybe THAT is the plan. In that case, by all means go ahead. Still, this whole proposal seems to me like the obsession with making online voting secure while keeping it both anonymous and free: very American, possibly in good faith, and still not even wrong. Right now, I honestly fail to understand how this whole concept could ever make sense to any of the stakeholders in this debate, in the US or elsewhere.