Tyranny of Metrics, teaching to the test and Open Data

Professor Jerry Muller, author of The Tyranny of Metrics, argues that measuring performance by numbers backfires.

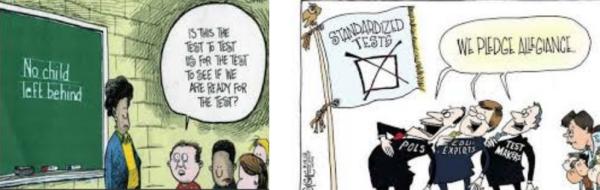

Teaching (or generally managing) to the test: a practice VERY easy to get wrong.


More and more organisations, Muller says, are today in the grip of “metric fixation”, defined as the belief that:

- it is both possible and desirable to replace professional judgment with numerical performance indicators based upon standardised data (metrics)

- the best way to motivate people within these organisations is by attaching rewards and penalties to their measured performance

Metric fixation has negative effects that Muller explains well. The first is gaming, that is, pushing people to put better metrics before their larger, ultimate purpose or responsibilities.

If judged by metrics only, for example, surgeons may refuse to operate on patients with more complex problems, whose surgical outcomes may lower the surgeons' metric scores.

Other negative effects of metric fixation are that people pursue only short-term goals, or goals that get measured simply because they are easy to measure, at the expense of other, more important ones that are harder to quantify or take much longer to achieve. This is what happens, for example, in schools that only “teach to the test”.

Even the costs of collecting and properly evaluating metrics may not be negligible.

The most serious long-term problem of metric fixation, however, would be that it saps enthusiasm and discourages initiative, innovation and risk-taking, and that “the large-scale organisations of our society”, especially public administrations working for the common good, would suffer more from this than smaller groups with fewer constraints.

My own take on this? Fixation be damned, full speed ahead with Open Data. But…

In my opinion, that article by Prof. Muller does make very good points against metrics-centered solutionism and blind faith in algorithms. I do not, however, consider them enough of a reason to give up the demand and good practices for Open Data in, from or about (at least) public administrations, schools, NGOs and private companies.

In general, there are two main, high-level reasons for Open Data. One is that opening data is the best way to extract economic value from them in the public interest, that is, in a really open and fair manner. The other, which is much more related to “metric fixation”, is that Open Data is necessary to guarantee transparency and accountability, especially (but not only) in Public Administrations.

There is a basic fact about the second reason that lots of people get wrong, so let’s clarify that first: the point of Open Data NEVER was (among people who do reason, at least) to make “people” (as in “generic people”) check certain claims by themselves. Assuming that any meaningful number of average citizens would have the skills, motivation and time to personally analyze raw data from any public administration is as naive as expecting that those same people could ever be involved in the maintenance of Free/Open Source Software.

As far as transparency goes, the real point of Open Data always was, and must remain, another one. The point is to make sure that everybody with the right skills, especially individuals not on the payroll of governments or big corporations, can do one thing: independently look at the raw data, in order to validate any decisions based on them, and report any irregularity.

This said, in general I suggest that the solution to metric fixation is to collect maybe even more data, but to avoid automatic decisions and to make sure that every stakeholder is encouraged to analyze the data, rather than just suffer from them. In the case of “teaching to the test”, for example:

- how many teachers use nationwide test results for themselves, that is, to compare their classes' results with national averages in order to figure out by themselves, on the basis of their own professional experience and knowledge of their pupils, if those pupils should be supported differently?

- automatic decisions made by machines are bad. Automatic signalling of potential anomalies that deserve examination by a human is usually good. Letting a computer decide that a teacher does not deserve a raise because her class scores are some decimal points “below average” is almost surely dumb. Having a computer give you signals like the one below, instead, is almost always a very smart and long overdue thing:

“all the classes by THAT specific teacher in the last three years scored significantly below average. Go talk to him NOW, to figure out if he needs a rebuke or HELP”
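To make the difference between "signalling" and "deciding" concrete, here is a minimal Python sketch of such a signal. Everything in it is my own assumption for illustration, not something taken from any real school system: the `ClassResult` record, the function name `teachers_to_talk_to`, and above all the meaning of "significantly below average", which I arbitrarily set to more than one national standard deviation below the national mean. The only output is a list of names for a human to follow up on; no raise, rebuke or other decision is computed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ClassResult:
    """One class's result on a nationwide test (hypothetical record layout)."""
    teacher: str
    year: int
    class_mean: float     # mean score of this class
    national_mean: float  # national mean for the same test and year
    national_sd: float    # national standard deviation (assumed > 0)


def teachers_to_talk_to(results, current_year, years=3, threshold=-1.0):
    """Return teachers whose classes ALL scored below `threshold` standard
    deviations from the national mean, in each of the last `years` years.

    The threshold is an illustrative assumption, not an accepted standard.
    The result is a list of people to go and talk to, nothing more.
    """
    first_year = current_year - years + 1
    recent = defaultdict(list)  # teacher -> [(year, z_score), ...]
    for r in results:
        if first_year <= r.year <= current_year:
            z = (r.class_mean - r.national_mean) / r.national_sd
            recent[r.teacher].append((r.year, z))

    flagged = []
    for teacher, scores in recent.items():
        years_covered = {year for year, _ in scores}
        # Require data for every year in the window: a teacher with only
        # one or two bad years is not signalled by this rule.
        if len(years_covered) == years and all(z < threshold for _, z in scores):
            flagged.append(teacher)
    return sorted(flagged)


# Made-up sample data, for illustration only.
sample = [
    ClassResult("A", 2022, 45.0, 60.0, 10.0),
    ClassResult("A", 2023, 44.0, 60.0, 10.0),
    ClassResult("A", 2024, 40.0, 60.0, 10.0),
    ClassResult("B", 2022, 58.0, 60.0, 10.0),
    ClassResult("B", 2023, 61.0, 60.0, 10.0),
    ClassResult("B", 2024, 59.0, 60.0, 10.0),
]

for name in teachers_to_talk_to(sample, current_year=2024):
    print(f"All classes by teacher {name} scored well below average "
          f"for three years: go talk to them.")
```

Note what the sketch deliberately does not do: teacher B, whose classes are some decimal points "below average" in two years out of three, never shows up, and teacher A is only pointed out to a human being, who then has to figure out what is really going on.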

Oh, and of course: privacy

Metric fixation can also have a very bad effect that is not mentioned in that article by Prof. Muller. If you amass very large quantities of sensitive data in one place, it will be much easier to copy and use them all in… unexpected ways. For details, ask Cambridge Analytica. So it is OK to collect data, but only after VERY careful analysis of which data they are, and of whether there is a need to concentrate them.

Who writes this, why, and how to help

I am Marco Fioretti, tech writer and aspiring polymath doing human-digital research and popularization.

I do it because YOUR civil rights and the quality of YOUR life depend every year more on how software is used AROUND you.

To this end, I have already shared more than a million words on this blog, without any paywall or user tracking, and am sharing the next million through a newsletter, also without any paywall.

The more direct support I get, the more I can continue to inform, for free, parents, teachers, decision makers, and everybody else who should know more stuff like this. You can support me with paid subscriptions to my newsletter, donations via PayPal (mfioretti@nexaima.net) or LiberaPay, or in any of the other ways listed here. THANKS for your support!